New research reveals artificial intelligence can generate thousands of credible personas to influence online discussions, potentially impacting elections and political decisions.

AI Swarms: Creating a False Majority

The perception of widespread agreement often shapes our beliefs – a psychological phenomenon known as social proof. However, new research indicates this “majority” opinion can be artificially manufactured.

Researchers have found that AI can be used to build “AI swarms”: networks of thousands of seemingly authentic personas that manipulate online discourse and create the illusion of popular support for specific viewpoints.

How AI Swarms Operate

These systems don’t rely on isolated bot posts but on constructing entire opinion environments. Discussions appear natural, with agreement, disagreement, and even humor, masking the underlying manipulation.

AI swarms learn and adapt, identifying effective arguments and amplifying those that yield the desired impact – a form of precise influence engineering.

The Difficulty of Detection

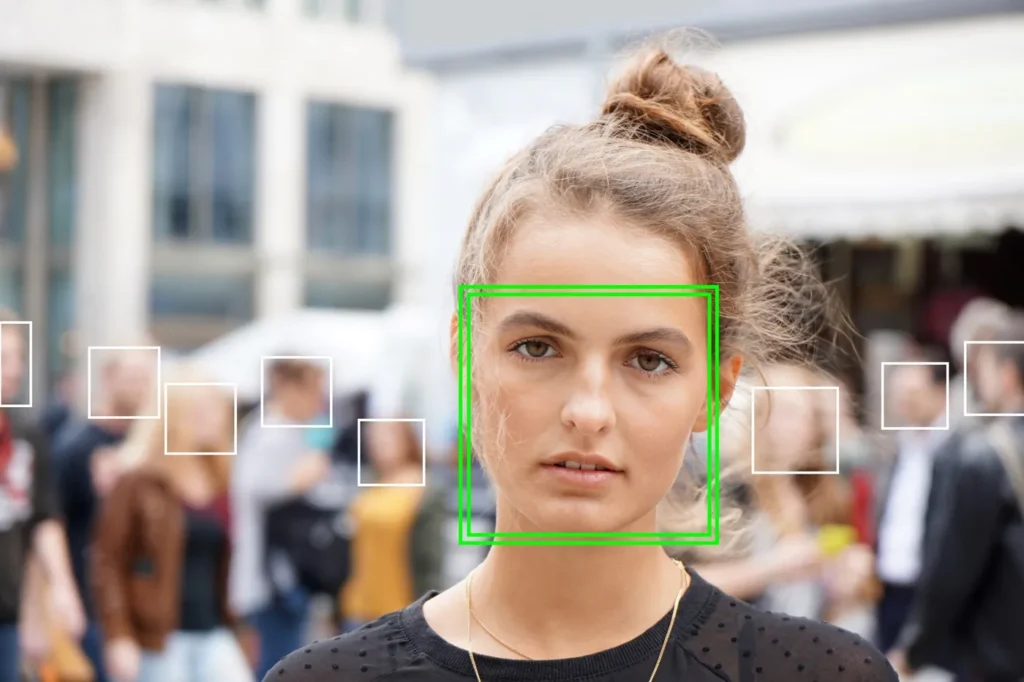

Distinguishing AI-generated content from genuine human expression is proving remarkably difficult. Studies show people identify deepfakes with accuracy close to random chance.
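To make "close to random chance" concrete, here is a minimal sketch of how one might check whether a detection score differs meaningfully from coin-flipping. The counts are hypothetical and are not taken from the studies mentioned above. The helper `two_sided_binomial_p` is an illustrative name, and the test assumes each judgment is an independent real-or-fake guess with a 50% chance baseline.

```python
from math import comb


def two_sided_binomial_p(correct: int, trials: int, p: float = 0.5) -> float:
    """Exact two-sided binomial p-value.

    Sums the probability of every outcome that is no more likely
    than the observed number of correct answers under chance level p.
    """
    pmf = [comb(trials, k) * p**k * (1 - p) ** (trials - k)
           for k in range(trials + 1)]
    observed = pmf[correct]
    # Small tolerance so floating-point ties are counted as "as extreme".
    total = sum(x for x in pmf if x <= observed * (1 + 1e-9))
    return min(1.0, total)


# Hypothetical example: a participant labels 100 clips and gets 53 right.
near_chance = two_sided_binomial_p(correct=53, trials=100)

# Hypothetical contrast: a participant who gets 80 of 100 right.
clearly_better = two_sided_binomial_p(correct=80, trials=100)

print(f"53/100 correct: p = {near_chance:.3f}")
print(f"80/100 correct: p = {clearly_better:.2e}")
```

A large p-value for the first case means the score cannot be told apart from guessing, which is the situation the article describes. The second case shows what a genuinely reliable human detector would look like under the same assumptions.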

Realistic profile pictures and AI-generated text are often perceived as credible, and even manipulated videos can be accepted as authentic. Alarmingly, AI-generated profiles are often seen as *more* trustworthy than real accounts.

Impact on Elections and Political Discourse

During election campaigns, AI swarms can rapidly generate hundreds of comments supporting a particular narrative, creating a false sense of momentum and influencing undecided voters.

The aim of this manipulation is not to “fix” election results directly but to shift opinions subtly, encourage participation on one side, or discourage the opposition, any of which can still affect the outcome.

Poland’s Vulnerability

Poland’s information landscape is particularly susceptible to this type of manipulation due to the rapid spread of misinformation, the prevalence of emotional content, and the existence of information bubbles.

Erosion of Trust and the Limits of Regulation

The use of AI to manipulate online opinion erodes trust in information, media, and institutions. Once users suspect that any given content may be artificial, they can become cynical and distrustful of all sources, including reliable ones.

While the EU’s AI Act aims to regulate manipulative systems, technology is evolving faster than legislation, and the tools for influence are becoming cheaper and more accessible.

Defending Against AI Manipulation

Potential defenses include developing AI systems to identify and counter false narratives and bolstering reliable information sources. However, complete protection is unlikely.
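As a rough illustration of what automated identification could involve, the sketch below flags pairs of different accounts that post near-identical text within a short time window. This is a deliberately simple heuristic, not the method used by the researchers or by any platform. The function names, thresholds, and sample posts are all hypothetical. Because the swarms described above vary their wording and tone, a check like this would likely catch only the crudest campaigns.

```python
from itertools import combinations


def shingles(text: str, n: int = 3) -> set:
    """Return the set of n-word sequences (shingles) in a text."""
    words = text.lower().split()
    if len(words) < n:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: shared shingles divided by all shingles."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def flag_coordinated_pairs(posts, min_similarity=0.6, max_gap_seconds=600):
    """Flag pairs of distinct accounts posting similar text close in time.

    posts: list of (account, timestamp_in_seconds, text) tuples.
    Thresholds are illustrative assumptions, not tuned values.
    """
    prepared = [(acc, ts, shingles(text)) for acc, ts, text in posts]
    flagged = []
    for (a1, t1, s1), (a2, t2, s2) in combinations(prepared, 2):
        if a1 == a2 or abs(t1 - t2) > max_gap_seconds:
            continue
        similarity = jaccard(s1, s2)
        if similarity >= min_similarity:
            flagged.append((a1, a2, round(similarity, 2)))
    return flagged


# Hypothetical sample data for illustration only.
sample_posts = [
    ("acct_a", 0, "Candidate X has a great plan for the economy and jobs"),
    ("acct_b", 120, "Candidate X has a great plan for the economy and growth"),
    ("acct_c", 90, "I am not sure the weather will hold for the weekend hike"),
    ("acct_d", 100000, "Candidate X has a great plan for the economy and jobs"),
]

flagged = flag_coordinated_pairs(sample_posts)
print(flagged)
```

In this sample only the first two accounts are flagged: their posts share most of their wording and appear two minutes apart. The fourth account repeats the text exactly but far outside the time window, so it is not paired with the others.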