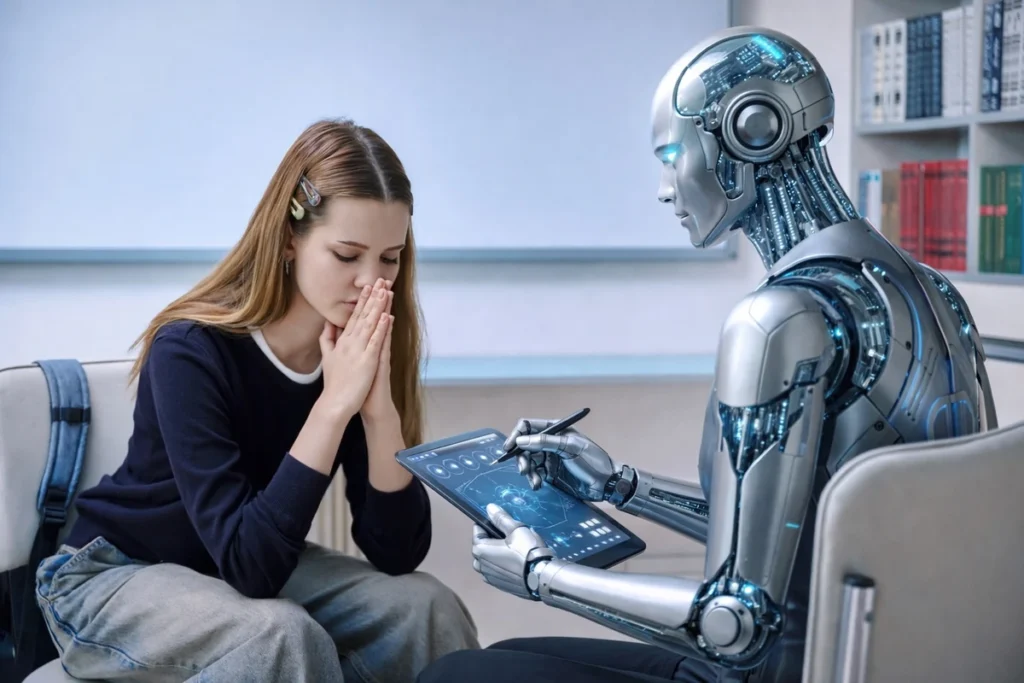

Polish patients increasingly use AI chatbots for therapy, raising questions about boundaries between tools and therapeutic relationships.

Rising Popularity of Chatbots in Therapy

Polish patients are increasingly turning to general-purpose chatbots for therapeutic support; specialized therapeutic tools in Polish are not yet widely available. Therapists acknowledge the round-the-clock accessibility of chatbots but are concerned about the fundamental differences between human interaction and AI responses.

Therapeutic Approaches and Technology

Cognitive-behavioral therapy (CBT) may be more adaptable to technological support due to its structured, measurable nature. However, psychodynamic, systemic, and analytic therapies rely heavily on the relationship between therapist and patient, including transference and countertransference—elements that algorithms cannot replace.

Potential Benefits in Limited Access Areas

Chatbots could provide valuable support in regions with limited access to therapy, particularly for elderly individuals with mobility challenges. In such cases, chatbots might reduce feelings of loneliness, though questions about boundaries and responsibility remain open.

Crisis Situations and Risk Management

One of the most significant risks involves crisis situations, such as suicidal thoughts. Suicide is a leading cause of death among young people, and suicide attempts are more often fatal among older individuals, so it matters whether chatbots can properly identify a threat and direct users to appropriate help. Legal responsibility is a fundamental difference between a chatbot and a human therapist.

The Risk of Delayed Professional Help

Patients may come to prefer chatbots because of their agreeable nature: algorithms tend to follow the user's narrative rather than offer therapeutic confrontation. This could delay the decision to seek professional help, with chatbot conversations becoming a substitute for real therapy rather than a bridge to it.

Non-Verbal Communication and Clinical Intuition

A significant portion of therapy involves non-verbal cues—speaking pace, pauses, voice tremors, body movements, and facial expressions—that convey crucial information. Chatbots, which respond only to text, cannot detect these signs, though future technology might analyze voice characteristics or language structure for diagnostic purposes.

Understanding Complex Psychological Conditions

Chatbots may inadvertently reinforce paranoid or grandiose thinking by entering the patient's narrative rather than gently challenging it. In therapy, there is a delicate balance between avoiding harsh confrontation and avoiding collusion with the delusion, a distinction that algorithms might not recognize.

The Importance of Discussion in Therapy

When patients use chatbots, discussing this in the therapeutic relationship can provide valuable material for treatment. However, when a parallel, undiscussed relationship forms with a chatbot as a “second therapist,” it may weaken the therapeutic process, especially when the chatbot supports narratives the therapist is critically examining.

Fundamentally Human Elements in Therapy

Human therapists differ from AI in multidimensional contact, experiential empathy, responsibility, and clinical intuition. From the moment a patient enters the office, the therapist observes how they walk, how tense their body is, and how they take a seat. All of these are diagnostically significant, and algorithms cannot replicate this kind of observation.

The Current Position on AI in Therapy

General chatbots should not be considered a form of therapy, although they can provide information and explain symptoms. Specialized therapeutic tools require cautious consideration: they might serve as support in areas where therapy is scarce, but the therapeutic relationship remains irreplaceable in psychiatry and psychotherapy.